Deploying your application automatically on an Azure Web App isn't really challenging. You have several options to do it (including Web Deploy, Git, FTP...); and several release management services (like Visual Studio Team Services) provide automated tasks for this. But you might run into some issues if you just follow the easy way.

The shortest way is still not the best way

You can deploy your application straight to your Azure Web App, but here are the problems you might run into:

- Your deployment package might be large, so the deployment process would be long enough to introduce significant downtime to your application.

- After the deployment, the Web App might need to restart. This results in a cold start: the first request will be slower to process and multiple requests may stack up waiting for the Web App to be ready to process them.

- Your deployment may fail due to files being used by the server processes.

- If your last deployment introduces critical issues, you have no way to quickly roll back to the previous version.

All of these may be tolerable if you are alone on your team and deploy your application to your own private test Web App. But that isn't exactly the purpose of cloud computing, is it?

I mean, even if you are targeting an integration environment used only by your kind frontend colleague, you don't want him to hit a brick wall, waiting for you to fix your API if something went wrong.

And if you are targeting a validation environment, would you like your QA to open a non-reproducible issue caused only by the deployment downtime?

And if you are deploying to your producti... OK, I think you got my point here, let's fix it!

Blue-Green deployment

Blue-Green deployment is a release approach to reduce downtime by ensuring you have two identical production environments: Blue and Green. At any time one of them is live, serving all production traffic, while the other is just idle.

Let's say that Blue is currently live.

You can deploy your new release in the environment that is not live, Green in our example. Once your deployment is finished, and your application is ready to serve requests, you can just switch the router so all incoming requests now go to Green instead of Blue.

Green is now live, and Blue is idle.

Credits: BlueGreenDeployment by Martin Fowler.

As well as reducing downtime, the Blue-Green deployment approach reduces risks: if your last release on Green produces unexpected behaviors, just roll back to the last version by switching back to Blue.
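To make the idea concrete, here is a minimal sketch of a Blue-Green switch in Python. The `BlueGreenRouter` class and its method names are invented for illustration and do not correspond to any Azure API; the point is only that going live and rolling back are the same pointer flip.

```python
class BlueGreenRouter:
    """Toy router that sends all traffic to exactly one of two environments."""

    def __init__(self, live="blue", idle="green"):
        self.live = live
        self.idle = idle
        self.releases = {live: None, idle: None}

    def deploy_to_idle(self, version):
        # New releases only ever land on the environment that serves no traffic.
        self.releases[self.idle] = version

    def switch(self):
        # Going live and rolling back are the same operation: flip the pointer.
        self.live, self.idle = self.idle, self.live

    def handle_request(self):
        return f"{self.live} serving {self.releases[self.live]}"


router = BlueGreenRouter()
router.releases["blue"] = "v1"      # Blue is live with the current version
router.deploy_to_idle("v2")         # Green receives the new release
router.switch()                     # Green goes live
after_release = router.handle_request()
router.switch()                     # something went wrong: back to Blue
after_rollback = router.handle_request()
```

Nothing is redeployed during the rollback: the previous version is still sitting on the environment that just went idle.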

Deployment slots to the rescue!

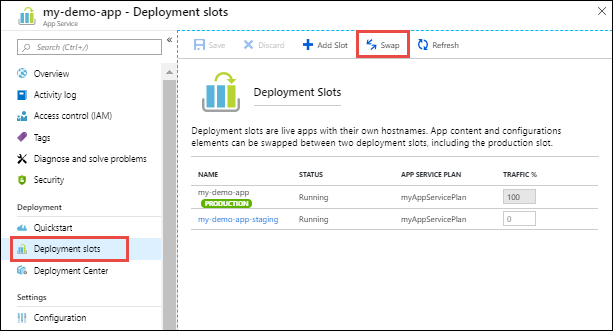

The great news is that you have a nice way to implement the Blue-Green deployments for Azure Web Apps: deployment slots (available in the Standard or Premium App Service plan mode).

What are deployment slots?

By default, each Azure Web App has a single deployment slot called production which represents the Azure Web App itself.

You can then create more deployment slots. They are actually live Web App instances with their own hostnames, but they are tied to the main Web App.

In fact, if I have a Web App called geeklearning, with default hostname geeklearning.azurewebsites.net, and I create a slot named staging, its hostname will be geeklearning-staging.azurewebsites.net.
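The naming rule in this example can be written down as a small helper. This is a sketch based only on the geeklearning example above; the function name `slot_hostname` is made up, and it assumes the default azurewebsites.net suffix rather than a custom domain.

```python
def slot_hostname(app_name, slot_name=None, suffix="azurewebsites.net"):
    """Return the default hostname of a Web App or of one of its slots."""
    # The production slot is the Web App itself, so it gets no slot suffix.
    if slot_name is None or slot_name == "production":
        return f"{app_name}.{suffix}"
    return f"{app_name}-{slot_name}.{suffix}"


production_host = slot_hostname("geeklearning")
staging_host = slot_hostname("geeklearning", "staging")
```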

You can easily swap two slots, including the production one. Swapping does not copy the website's content; in line with the Blue-Green approach, it is closer to swapping DNS pointers.

When dealing with deployment slots, you should also be aware of how configuration works.

Indeed, some configuration elements will follow the content across a swap while others will stay on the same slot.

In particular, the app settings and connection strings are swapped by default, but can be configured to stick to a slot.

Settings that are swapped:

- General settings - such as framework version, 32/64-bit, Web sockets

- App settings (can be configured to stick to a slot)

- Connection strings (can be configured to stick to a slot)

- Handler mappings

- Monitoring and diagnostic settings

- WebJobs content

Settings that are not swapped:

- Publishing endpoints

- Custom Domain Names

- SSL certificates and bindings

- Scale settings

- WebJobs schedulers
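Here is a small Python model of how swapped and sticky settings behave during a swap. It is a simplification, not the real Azure mechanism: the `Slot` class, the `swap` function and the setting names `Theme` and `Db` are all hypothetical, and only app settings are modeled.

```python
from dataclasses import dataclass, field


@dataclass
class Slot:
    name: str
    content: str                                 # deployed application version
    settings: dict = field(default_factory=dict)
    sticky: set = field(default_factory=set)     # names marked as "Slot setting"


def swap(a, b):
    """Exchange content and non-sticky settings; sticky settings stay put."""
    a.content, b.content = b.content, a.content
    a_moving = {k: v for k, v in a.settings.items() if k not in a.sticky}
    b_moving = {k: v for k, v in b.settings.items() if k not in b.sticky}
    a_staying = {k: v for k, v in a.settings.items() if k in a.sticky}
    b_staying = {k: v for k, v in b.settings.items() if k in b.sticky}
    a.settings = {**b_moving, **a_staying}
    b.settings = {**a_moving, **b_staying}


production = Slot("production", "v1",
                  {"Theme": "light", "Db": "prod-db"}, sticky={"Db"})
staging = Slot("staging", "v2",
               {"Theme": "dark", "Db": "staging-db"}, sticky={"Db"})
swap(production, staging)
```

After the swap, production serves `v2` with the `Theme` value that travelled with it, but still points at `prod-db` because `Db` was marked as sticky.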

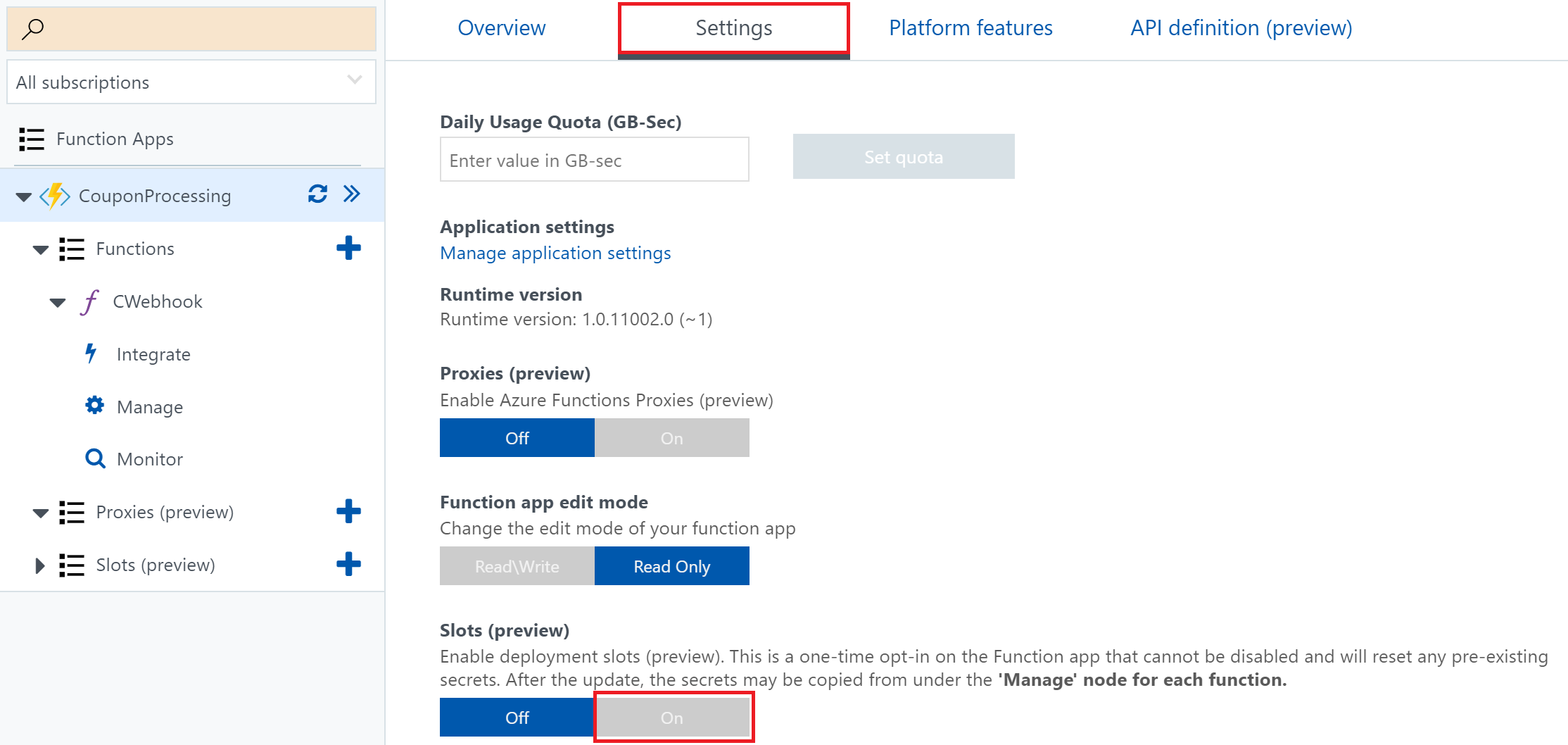

Create a new slot

To create a new deployment slot, just open your Web App from the Azure Portal and click on Deployment slots, and then Add Slot.

You can choose to clone the configuration from an existing slot (including the production one), or to start with a fresh new configuration.

Once your slot has been created, you can configure it like a normal Web App, and optionally mark some settings as 'Slot setting' so that they stick to your new slot.

Successful deployment process

Now that you have implemented Blue-Green deployment with Azure Web App deployment slots, you have resolved almost all of the issues listed earlier.

The last one left is that your deployment may fail if a file is currently locked by a process on the Web App. To ensure that this does not happen, it is safer to stop the Web server before deploying and start it again just after. As you are deploying to a staging slot, there is no drawback to doing this!

So, the whole successful deployment process comes down to:

- Stop the staging slot

- Deploy to the staging slot

- Start the staging slot

- Swap the staging slot with the production one

You may leave the staging slot running after the deployment process, to let you swap it back in case of any issues.
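The four-step process above can be sketched as a single function. This is a minimal illustration, assuming a client object that exposes `stop`, `deploy`, `start` and `swap`; the `FakeSlotClient` below is a stand-in that only records calls, not a real Azure SDK class.

```python
class FakeSlotClient:
    """Stand-in for a real Azure client; it only records the calls it receives."""

    def __init__(self, fail_on_deploy=False):
        self.calls = []
        self.fail_on_deploy = fail_on_deploy

    def stop(self, slot):
        self.calls.append(("stop", slot))

    def deploy(self, slot, package):
        self.calls.append(("deploy", slot))
        if self.fail_on_deploy:
            raise RuntimeError("deployment failed")

    def start(self, slot):
        self.calls.append(("start", slot))

    def swap(self, source, target):
        self.calls.append(("swap", source, target))


def release(client, package, staging="staging", production="production"):
    client.stop(staging)              # a stopped slot holds no file locks
    client.deploy(staging, package)   # an exception here aborts before the swap
    client.start(staging)
    client.swap(staging, production)


ok = FakeSlotClient()
release(ok, "site.zip")

broken = FakeSlotClient(fail_on_deploy=True)
try:
    release(broken, "site.zip")
except RuntimeError:
    pass  # production was never touched
```

Because the swap is the last step, a failed deployment leaves the production slot exactly as it was.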

Deploy from Visual Studio Team Services

Update: there is an easier way to deploy from VSTS now: learn how!

Implementing this deployment process in a VSTS Build or Release definition can be a little challenging: although there is a default build task to deploy your application to an Azure Web App, even to a specific slot, it cannot stop, swap or start slots.

Fortunately, you can run PowerShell scripts, and even have them use an Azure connection configured for your Team Project, thanks to the Azure PowerShell task.

You then just have to write three PowerShell scripts using the PowerShell Modules for Azure.

Stop Azure Web App Slot

Create a new PowerShell script named StopSlot.ps1, write the following content, and publish it to your source repository:

Start Azure Web App Slot

Create a new PowerShell script named StartSlot.ps1, write the following content, and publish it to your source repository:

Swap Azure Web App Slots

Create a new PowerShell script named SwapSlots.ps1, write the following content, and publish it to your source repository:

Add PowerShell scripts to your build definition

You are now ready to edit your Build or Release definition in VSTS to support your successful Azure Web App deployment process:

- Add the three Azure Powershell tasks, one before your Azure Web App Deployment task and two just after

- Configure your tasks:

  - Azure Connection Type: Azure Classic

  - Azure Classic Subscription: Select your configured Azure Classic service (you should have this already configured if you deployed your application to Azure, otherwise learn how to configure it on the VSTS documentation)

  - Script Path: Select the path to your script (from sources for a Build, or artifacts for a Release)

  - Script Arguments: Should match the parameters declared in your scripts, like -WebAppName 'slots-article' -SlotName 'staging'

You now have a truly successful Azure Web App deployment process, automated from Visual Studio Team Services, without downtime or cold start, robust and safe!

Updated: change screenshots to match VSTS and Azure new UIs, add a link to an easier successful Azure Web App deployment process from VSTS

I should start this post by apologizing for getting terminology wrong. Microsoft just renamed a bunch of stuff around Azure WebSites/Web Apps so I’ll likely mix up terms from the old ontology with the new ontology (check it, I used “ontology” in a sentence, twice!). I will try to favour the new terminology.

On my Web App I have a WebJob that does some background processing of some random tasks. I also use scheduler to drop messages onto a queue to do periodic tasks such as nightly reporting. Recently I added a deployment slot to the Web App to provide a more seamless experience to my users when I deploy to production, which I do a few times a day. The relationship between WebJobs and deployment slots is super confusing in my mind. I played with it for an hour today and I think I understand how it works. This post is an attempt to explain.

If you have a deployment slot with a webjob and a live site with a webjob, are both running?

Yes, both of these two jobs will be running at the same time. When you deploy to the deployment slot the webjob there is updated and restarted to take advantage of any new functionality that might have been deployed.

My job uses a queue, does this mean that there are competing consumers any time I have a webjob?

If you have used the typical way of getting messages from a queue in a webjob, that is to say using the QueueTrigger annotation on a parameter:

then yes. Both of your webjobs will attempt to read this message. Which one gets it? Who knows!
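A toy simulation makes the competing-consumers situation easier to picture. This is plain Python, not the WebJobs SDK: the in-memory `deque` stands in for the shared queue, and the random choice stands in for whichever webjob happens to poll first.

```python
import random
from collections import deque

queue = deque(f"msg-{i}" for i in range(6))
handled = {"production": [], "staging": []}
rng = random.Random()  # unseeded on purpose: the split differs between runs

while queue:
    # Whichever webjob happens to poll first takes the next message.
    consumer = rng.choice(["production", "staging"])
    handled[consumer].append(queue.popleft())
```

Every message gets handled, but which slot's code handled it changes from run to run, which is exactly the problem when the two slots run different versions.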

Doesn’t that kind of break your functionality if you’re deploying different functionality for the same queue giving you a mix of old and new functionality?

Yep! Messages might even be processed by both. That can happen in normal operation on multiple nodes anyway, which is why your jobs should be idempotent. You can either turn off the webjob for your slot or use differently named queues for production and your slot. This can then be configured using the new slot app settings. To do this you need to set up a QueueNameResolver; you can read about that here
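Both ideas, per-slot queue names and idempotent jobs, can be sketched in a few lines of Python. This is an illustration of the concepts only, not the WebJobs SDK resolver: the `QueueSuffix` setting name, `resolve_queue_name` and `IdempotentHandler` are all hypothetical, and a real job would keep the set of processed ids in shared storage rather than in memory.

```python
def resolve_queue_name(base_name, settings):
    """Append a per-slot suffix taken from a (hypothetical) sticky app setting."""
    suffix = settings.get("QueueSuffix", "")
    return f"{base_name}-{suffix}" if suffix else base_name


class IdempotentHandler:
    """Ignores a message whose id has already been processed."""

    def __init__(self):
        self.seen = set()
        self.reports = []

    def handle(self, message_id, payload):
        if message_id in self.seen:
            return False              # duplicate delivery: do nothing
        self.seen.add(message_id)
        self.reports.append(payload)
        return True


# Production has no suffix configured; the staging slot has a sticky one.
prod_queue = resolve_queue_name("reports", {})
staging_queue = resolve_queue_name("reports", {"QueueSuffix": "staging"})

handler = IdempotentHandler()
first = handler.handle("42", "nightly report")
second = handler.handle("42", "nightly report")   # same message delivered again
```

Since the suffix lives in a slot setting, it stays with the slot across a swap, so the code that lands in production reads the production queue.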

What about the webjobs dashboard, will that help me distinguish what was run?

Kind of. As far as I can tell the output part of this page shows output from the single instance of the webjob running on the current slot.

However, the functions invoked list shows all invocations across any instance, so the log messages might tell you one thing and the function list another. Be warned that when you swap a slot, the output flips from one site to the other: if I did a swap on this dashboard and then refreshed, the output would be different but the functions invoked list would be the same.